Ms. An (Meeting Students’ Academic Needs): A Socially Adaptive Robot Tutor for Student Engagement in Math Education

Tuesday, March 27, 2018 - 10:00 am

Meeting room 2265, Innovation Center

DISSERTATION DEFENSE

Author : Karina Liles

Advisor : Dr. Jenay Beer

Abstract

This research presents a new, socially adaptive robot tutor, Ms. An (Meeting Students’ Academic Needs). The goal of this research was to use a decision tree model to develop a socially adaptive robot tutor that predicted and responded to student emotion and performance to actively engage students in math education. The novelty of this multi-disciplinary project is the combination of the fields of HRI, AI, and education. In this study we 1) implemented a decision tree model to classify student emotion and performance for use in adaptive robot tutoring (an approach not applied to educational robotics); 2) presented an intuitive interface for seamless robot operation by novice users; and 3) applied direct human teaching methods (guided practice and progress monitoring) for a robot tutor to engage students in math education.

Twenty 4th- and 5th-grade students in rural South Carolina participated in a between-subjects study with two conditions: A) with a non-adaptive robot (control group); and B) with a socially adaptive robot (adaptive group). Students engaged in two one-on-one tutoring sessions to practice multiplication per the South Carolina 4th- and 5th-grade math state standards.

Although our decision tree models were less optimal than we had hoped, the results gave answers to our current questions and clarity for future directions. Our adaptive strategies to engage students academically were effective. Further, all students enjoyed working with the robot and we did not see a difference in emotional engagement across the two groups. Our adaptive strategies made students think more deeply about their work and focus more.

This study offered insight for developing a socially adaptive robot tutor to engage students academically and emotionally while practicing multiplication. Results from this study will inform the human-robot interaction (HRI) and artificial intelligence (AI) communities on best practices and techniques within the scope of this work.

Date : March 27th, 2018

Time : 10:00 am

Place : Meeting room 2265, Innovation Center

COLLOQUIUM

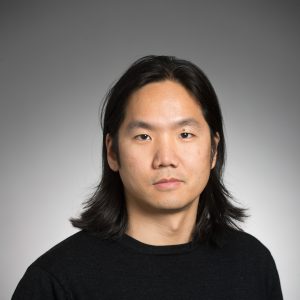

Guorong Wu

Abstract

Neuroimaging research has developed rapidly in the last decade, with various applications of brain mapping technologies that provide mechanisms for discovering neuropsychiatric disorders in vivo. The human brain is something of an enigma. Much is known about its physical structure, but how it manages to marshal its myriad components into a powerhouse capable of performing so many different tasks remains a mystery. In this talk, I will demonstrate that it is more important to understand how the brain regions are connected than to study each brain region individually. I will introduce my recent research on the human brain connectome, with a focus on revealing the high-order brain connectome and functional dynamics using learning-based approaches, and the successful applications in identifying subjects with neurological disorders such as autism and Alzheimer’s disease.

Dr. Guorong Wu is an Assistant Professor in the Department of Radiology at the University of North Carolina, Chapel Hill. His primary research interests are medical image analysis, big data mining, scientific data visualization, and computer-assisted diagnosis. He has been working on medical image analysis since he started his Ph.D. study in 2003. In 2007, he received the Ph.D. degree in computer science and engineering from Shanghai Jiao Tong University, Shanghai, China. He has developed many image processing methods for brain magnetic resonance imaging (MRI), diffusion tensor imaging, breast dynamic contrast-enhanced MRI (DCE-MRI), and computed tomography (CT) images. These cutting-edge computational methods have been successfully applied to the early diagnosis of Alzheimer’s disease, infant brain development study, and image-guided lung cancer radiotherapy. Meanwhile, Dr. Wu leads a multidisciplinary research team at UNC that aims to translate cutting-edge intelligent techniques to imaging-based biomedical applications in order to advance translational medicine. Dr. Wu has released more than ten image analysis software packages to the medical imaging community, which together have been downloaded more than 15,000 times since 2009.

Dr. Wu is the recipient of an NIH Career Development Award (K01) and PI of an NIH Exploratory/Developmental Research Grant Award (R21). He also serves as Co-PI and Co-Investigator on other NSF and NIH grants.

Date: Mar. 26, 2018

Time: 10:15-11:15 am

Place: Innovation Center, Room 2277

COLLOQUIUM

Qiang Zeng

Abstract

By applying out-of-the-box thinking and cross-area approaches, novel solutions can be devised to solve challenging problems. In this talk, I will share my experiences in applying cross-area approaches, and present creative designs to solve two difficult security problems.

Problem 1: Decentralized Android Application Repackaging Detection. An unethical developer can download a mobile application, make arbitrary modifications (e.g., inserting malicious code and replacing the advertisement library), repackage the app, and then distribute it; the attack is called application repackaging. Such attacks have posed severe threats, causing $14 billion monetary loss annually and propagating over 80% of mobile malware. Existing countermeasures are mostly centralized and imprecise. We consider building the repackaging detection capability into apps, so that user devices are used to detect repackaging in a decentralized fashion. In order to protect repackaging detection code from attacks, we propose a creative use of logic bombs, which are commonly used in malware. The use of hacking techniques for benign purposes has delivered an innovative and effective defense technique.

Problem 2: Precise Binary Code Semantics Extraction. Binary code analysis allows one to analyze a piece of binary code without accessing the corresponding source code. It is widely used for vulnerability discovery, dissecting malware, user-side crash analysis, etc. Today, binary code analysis has become more important than ever. With the booming development of the Internet-of-Things industry, a large number of firmware images of IoT devices can be downloaded from the Internet. This raises challenges for researchers, third-party companies, and government agents who need to analyze these images at scale, without access to the source code, to identify malicious programs, detect software plagiarism, and find vulnerabilities. I will introduce a brand new binary code analysis technique that learns from Natural Language Processing, an area remote from code analysis, to extract useful semantic information from binary code.

Dr. Qiang Zeng is an Assistant Professor in the Department of Computer & Information Sciences at Temple University. He received his Ph.D. in Computer Science and Engineering from the Pennsylvania State University, and his B.E. and M.E. degrees in Computer Science and Engineering from Beihang University, China. He has extensive industry experience, having worked at the IBM T.J. Watson Research Center, NEC Laboratories America, Symantec, and Yahoo.

Dr. Zeng’s main research interest is Systems and Software Security. He currently works on IoT Security, Mobile Security, and deep learning for solving security problems. He has published papers in PLDI, NDSS, MobiSys, CGO, DSN and TKDE.

Date: Mar. 21, 2018

Time: 10:15-11:15 am

Place: Innovation Center, Room 2277

COLLOQUIUM

Speaker: Heewook Lee

Abstract: Genetic diversity is necessary for survival and adaptability of all forms of life. The importance of genetic diversity is observed universally, from humans to bacteria. Therefore, it is a central challenge to improve our ability to identify and characterize the extent of genetic variants in order to understand the mutational landscape of life. In this talk, I will focus on two important instances of genetic diversity found in (1) human genomes (particularly the human leukocyte antigens, HLA) and (2) bacterial genomes (rearrangement of insertion sequence [IS] elements). I will first show that specific graph data structures can naturally encode high levels of genetic variation, and I will describe our novel, efficient graph-based computational approaches to identify genetic variants for both HLA and bacterial rearrangements. Each of these methods is specifically tailored to its own problem, making it possible to achieve state-of-the-art performance. For example, our method is the first to be able to reconstruct full-length HLA sequences from short-read sequence data, making it possible to discover novel alleles in individuals. For IS element rearrangement, I used our new approach to provide the first estimate of the genome-wide rate of IS-induced rearrangements, including recombination. I will also show the spatial patterns and the biases that we find by analyzing E. coli mutation accumulation data spanning over 2.2 million generations. These graph-centric ideas in our computational approaches provide a foundation for analyzing genetically heterogeneous populations of genes and genomes, and provide directions for ways to investigate other instances of genetic diversity found in life.

Bio: Dr. Heewook Lee is currently a Lane Fellow in the Computational Biology Department of the School of Computer Science at Carnegie Mellon University, where he works on developing novel assembly algorithms for reconstructing highly diverse immune-related genes, including human leukocyte antigens. He received a B.S. in computer science from Columbia University, and obtained an M.S. and a Ph.D. in computer science from Indiana University. Prior to his graduate studies, he also worked as a bioinformatics scientist at a sequencing center/genomics company, where he was in charge of the computational unit responsible for carrying out various microbial genome projects and the Korean Human Genome project.

Date: Mar. 16, 2018

Location: Innovation Center, Room 2277

Time: 10:15 - 11:15 AM

Soteris Demetriou

Abstract

In contrast with traditional ubiquitous computing, mobile devices are now user-facing, more complex and interconnected. Thus they introduce new attack surfaces, which can result in severe private information leakage. Due to the rapid adoption of smart devices, there is an urgent need to address emerging security and privacy challenges to help realize the vision of a secure, smarter and personalized world.

In this talk, I will focus on the smartphone and its role in smart environments. First, I will show how the smartphone's complex architecture allows third-party applications and advertising networks to perform inference attacks and compromise user confidentiality. Further, I will demonstrate how combining techniques from both systems and data sciences can help us build tools to detect such leakage. Second, I will show how a weak mobile application adversary can exploit vulnerabilities hidden in the interplay between smartphones and smart devices. I will then describe how we can leverage both strong mandatory access control and flexible user-driven access control to design practical and robust systems to mitigate such threats. I will conclude by discussing how in the future I want to enable a trustworthy Internet of Things, focusing not only on strengthening smartphones, but also on emerging intelligent platforms and environments (e.g., automobiles, smart buildings/cities) and new user interaction modalities in IoT (acoustic signals).

Soteris Demetriou is a Ph.D. Candidate in Computer Science at the University of Illinois at Urbana-Champaign. His research interests lie at the intersection of mobile systems and security and privacy, with a current focus on smartphones and IoT environments. He discovered side-channels in the virtual process filesystem (procfs) of the Linux kernel that can be exploited by malicious applications running on Android devices; he built Pluto, an open-source tool for detection of sensitive user information collected by mobile apps; he designed security enhancements for the Android OS which enable mandatory and discretionary access control for external devices. His work prompted security additions in the popular Android operating system, has received a distinguished paper award at NDSS, and is recognized by awards bestowed by Samsung Research America and Hewlett-Packard Enterprise. Soteris is a recipient of the Fulbright Scholarship, and in 2017 was selected by the Heidelberg Laureate Forum as one of the 200 most promising young researchers in the fields of Mathematics and Computer Science.

Date: Mar. 14, 2018

Time: 10:15-11:15 am

Place: Innovation Center, Room 2277

Antonios Argyriou

Abstract

The next generation of cellular wireless communication systems (WCS) aspires to be a paradigm shift and not just an incremental version of existing systems. These systems will bring several technical and conceptual advances, resulting in an ecosystem that aims to deliver orders of magnitude higher performance (throughput, delay, energy). These systems will essentially serve as conduits among service/content providers and users, and are expected to support a significantly enlarged and diversified bouquet of applications.

In this talk we will first introduce the audience to the fundamental concepts of WCS that brought us to this day. Subsequently, we will identify the application trends that drive specific design choices of future WCS. Then, we will present a new idea for designing and optimizing future WCS that puts the specific application at the focus of our choices. The discussion will be based on two key application categories, namely wireless monitoring and video delivery. In the last part of this talk we will discuss how this paradigm, which elevates the role of the applications, opens up new directions for understanding, operating, and designing future WCS.

Dr. Antonios Argyriou received the Diploma in electrical and computer engineering from Democritus University of Thrace, Greece, in 2001, and the M.S. and Ph.D. degrees in electrical and computer engineering as a Fulbright scholar from the Georgia Institute of Technology, Atlanta, USA, in 2003 and 2005, respectively. Currently, he is an Assistant Professor at the Department of Electrical and Computer Engineering, University of Thessaly, Greece. From 2007 until 2010 he was a Senior Research Scientist at Philips Research, Eindhoven, The Netherlands, where he led the research efforts on wireless body area networks. From 2004 until 2005, he was a Senior Engineer at Soft.Networks, Atlanta, GA. Dr. Argyriou currently serves on the editorial board of the Journal of Communications. He has also served as guest editor for the IEEE Transactions on Multimedia Special Issue on Quality-Driven Cross-Layer Design, and he was also a lead guest editor for the Journal of Communications, Special Issue on Network Coding and Applications. Dr. Argyriou serves on the TPC of several international conferences and workshops in the area of wireless communications, networking, and signal processing. His current research interests are in the areas of wireless communications, cross-layer wireless system design (with applications in video delivery, sensing, vehicular systems), statistical signal processing theory and applications, optimization, and machine learning. He is a Senior Member of IEEE.

Date: Mar. 12, 2018

Time: 10:15-11:15 am

Place: Innovation Center, Room 2277
